Scale-Calibrated Post-Training Quantization for Vision-Language-Action Models

QuantVLA enables efficient deployment of vision-language-action models through training-free post-training quantization.

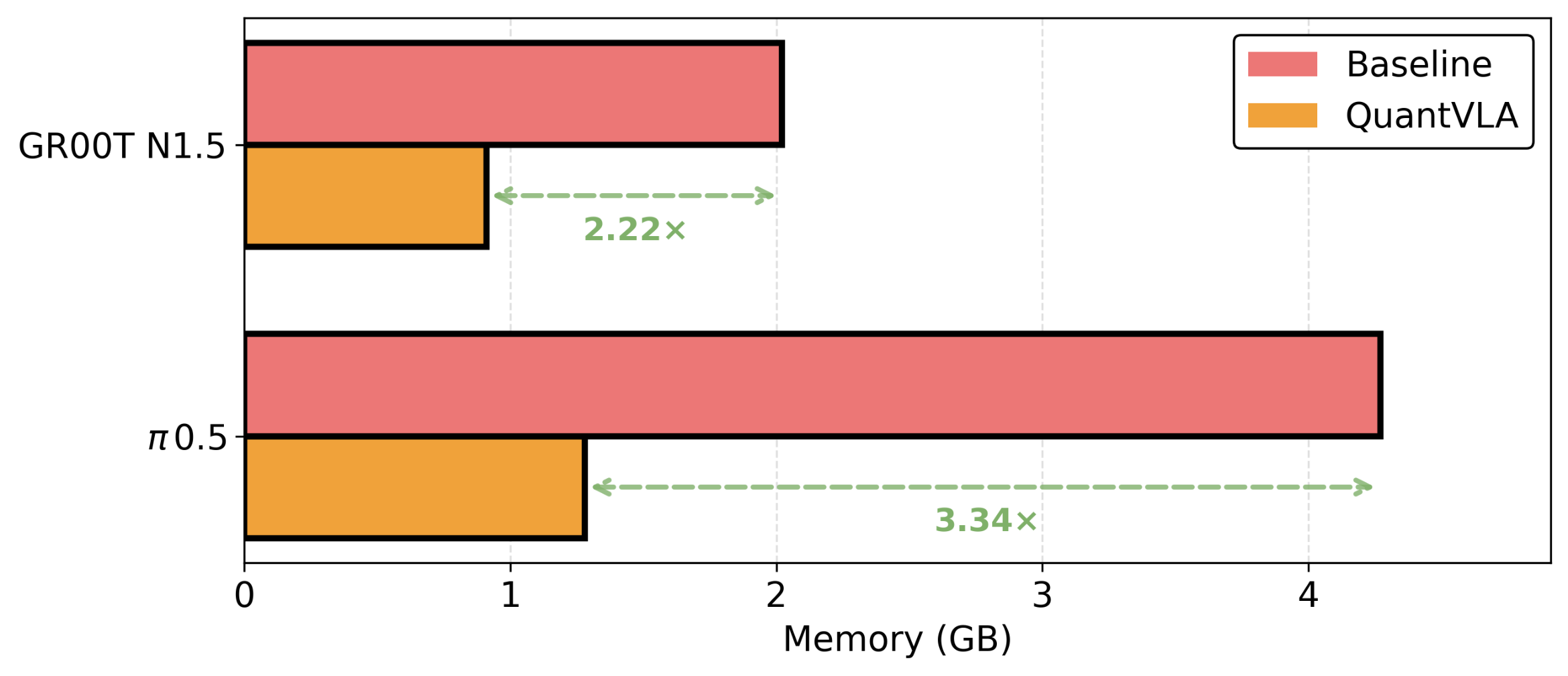

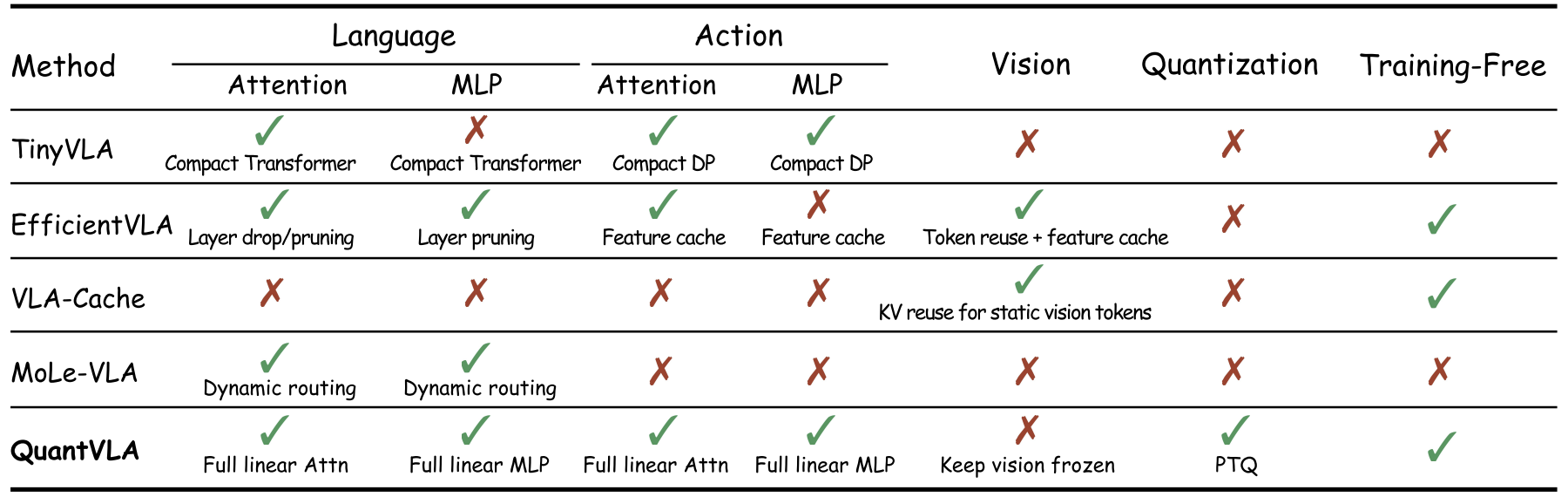

Vision-language-action (VLA) models unify perception, language, and control for embodied agents, but practical deployment is hampered by rapidly growing compute and memory demands, especially as models scale to longer horizons and larger backbones. To address these bottlenecks, we introduce QuantVLA, a training-free post-training quantization (PTQ) framework that, to our knowledge, is the first PTQ approach for VLA systems and the first to successfully quantize a diffusion transformer (DiT) action head. QuantVLA incorporates three scale-calibrated components: (1) a selective quantization layout that integerizes all linear layers in both the language backbone and the DiT except the attention projections, which stay in floating point to preserve the original operator schedule; (2) attention temperature matching, a lightweight per-head scaling mechanism that stabilizes attention logits and is folded into the dequantization scales at inference; and (3) output head balancing, a per-layer residual interface calibration that mitigates post-projection energy drift. The framework requires no additional training, uses only a small unlabeled calibration buffer, and supports integer kernels for low-bit weights and activations while leaving the architecture unchanged. Across representative VLA models on LIBERO, QuantVLA exceeds the average task success rates of full-precision baselines and achieves about 70% relative memory savings on the quantized components, providing a practical pathway toward scalable low-bit embodied intelligence under strict compute, memory, and power constraints.
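To make "integer kernels for low-bit weights and activations" concrete, the following is a minimal sketch of a W4A8 linear layer, written in plain Python for readability. It assumes symmetric uniform quantization with one scale per weight row and one scale per activation vector; these choices, and the helper names `quantize_symmetric` and `w4a8_linear`, are illustrative assumptions and not the calibration scheme QuantVLA itself uses.

```python
def quantize_symmetric(values, bits):
    """Symmetric uniform quantization of a list of floats.

    Returns (integers, scale) such that integer * scale approximates the input.
    Assumption for illustration: max-abs scaling, no zero point.
    """
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    ints = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return ints, scale


def w4a8_linear(x, weight):
    """Compute y = W x with 4-bit weights and 8-bit activations."""
    x_q, x_scale = quantize_symmetric(x, bits=8)
    y = []
    for row in weight:
        w_q, w_scale = quantize_symmetric(row, bits=4)    # one scale per output row
        acc = sum(wi * xi for wi, xi in zip(w_q, x_q))    # integer accumulation
        y.append(acc * w_scale * x_scale)                 # dequantize with both scales
    return y


def fp_linear(x, weight):
    """Floating-point reference for comparison."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in weight]


x = [0.5, -0.9, 0.25, 2.0]
W = [[0.1, -0.2, 0.3, 0.05],
     [-0.4, 0.15, 0.0, 0.25]]

y_ref = fp_linear(x, W)
y_q = w4a8_linear(x, W)
print("reference:", y_ref)
print("W4A8     :", y_q)
```

For intuition on the memory side, storing a weight in 4 bits instead of 16 saves at most 1 - 4/16 = 75% before accounting for the stored scales, which is in the same range as the roughly 70% savings reported for the quantized components. The exact accounting behind the reported figure is not given on this page.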

LIBERO Benchmark — Success Rate (%)

| Base Model | Method | Precision | Layer Selection | Spatial | Object | Goal | Long | Avg |
|---|---|---|---|---|---|---|---|---|
| π0.5 | Baseline | FP16 | – | 98.50 | 99.00 | 97.50 | 93.50 | 97.10 |
| π0.5 | +SmoothQuant | W8A8 | LLM | 97.50 | 98.50 | 98.00 | 92.50 | 96.60 |
| π0.5 | +SmoothQuant | W8A8 | LLM + DiT(MLP) | 98.00 | 99.00 | 99.00 | 92.00 | 97.00 |
| π0.5 | +DuQuant | W4A8 | LLM | 98.00 | 98.50 | 97.50 | 92.00 | 96.50 |
| π0.5 | +DuQuant | W4A8 | LLM + DiT(MLP) | 98.00 | 97.00 | 94.50 | 92.00 | 95.40 |
| π0.5 | +QuantVLA | W4A8 | LLM | 98.50 | 99.00 | 96.50 | 96.50 | 97.60 |
| π0.5 | +QuantVLA | W4A8 | LLM + DiT(MLP) | 98.50 | 98.00 | 98.00 | 96.00 | 97.60 |
| GR00T | Baseline | FP16 | – | 92.00 | 92.00 | 86.00 | 76.00 | 86.50 |
| GR00T | +DuQuant | W4A8 | LLM | 86.00 | 92.00 | 80.00 | 80.00 | 84.50 |
| GR00T | +DuQuant | W4A8 | LLM + DiT | 66.00 | 70.00 | 68.00 | 76.00 | 70.00 |
| GR00T | +QuantVLA | W4A8 | LLM | 96.00 | 94.00 | 92.00 | 66.00 | 87.00 |
| GR00T | +QuantVLA | W4A8 | LLM + DiT | 96.00 | 92.00 | 90.00 | 74.00 | 88.00 |
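The Avg column appears to be the unweighted mean of the four LIBERO suites (Spatial, Object, Goal, Long). The short check below recomputes it for the FP16 baselines and the full QuantVLA layouts, using only numbers from the table; the dictionary layout and helper names are illustrative.

```python
# (base model, method, layer selection) -> ([Spatial, Object, Goal, Long], reported Avg)
rows = {
    ("π0.5", "Baseline", "-"): ([98.50, 99.00, 97.50, 93.50], 97.10),
    ("π0.5", "QuantVLA", "LLM + DiT(MLP)"): ([98.50, 98.00, 98.00, 96.00], 97.60),
    ("GR00T", "Baseline", "-"): ([92.00, 92.00, 86.00, 76.00], 86.50),
    ("GR00T", "QuantVLA", "LLM + DiT"): ([96.00, 92.00, 90.00, 74.00], 88.00),
}


def mean_success(scores):
    """Unweighted mean over the four task suites."""
    return sum(scores) / len(scores)


def delta_vs_baseline(model, method, layers):
    """Average success-rate change relative to the FP16 baseline, in points."""
    base = mean_success(rows[(model, "Baseline", "-")][0])
    return mean_success(rows[(model, method, layers)][0]) - base


for key, (scores, reported) in rows.items():
    print(key, round(mean_success(scores), 3), "reported:", reported)

gain_pi05 = delta_vs_baseline("π0.5", "QuantVLA", "LLM + DiT(MLP)")
gain_gr00t = delta_vs_baseline("GR00T", "QuantVLA", "LLM + DiT")
print("gain over FP16:", gain_pi05, gain_gr00t)
```

The recomputed means agree with the reported Avg values to within rounding, and the W4A8 QuantVLA layouts come out 0.5 points (π0.5) and 1.5 points (GR00T) above their FP16 baselines on average.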

The table above reports LIBERO success rates for QuantVLA alongside SmoothQuant and DuQuant. The method relies on post-training quantization to reduce model size while maintaining VLA capabilities.

We present QuantVLA, to our knowledge the first PTQ framework for VLA models, which surpasses full-precision baselines in average LIBERO success rate without any additional training. Using a selective layout, it integerizes the language backbone and the feedforward blocks of the diffusion transformer while attention projections remain in floating point. Two lightweight calibration scalars align the attention temperature and restore the output energy, thereby stabilizing low-bit inference. As a result, QuantVLA cuts memory usage by about 70% on the quantized components while improving average task success. Overall, QuantVLA is training-free, preserves the original architecture, and is robust across modalities, offering a practical path to low-bit deployment and laying the groundwork for future advances, lower power budgets, and reliable long-horizon generation.
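The two calibration scalars can be illustrated with a small sketch. It assumes that the attention temperature scalar is the ratio of full-precision to quantized logit standard deviations for one head, and that the output balancing scalar is the ratio of root-mean-square output magnitudes for one layer. Both definitions, and the function names, are assumptions made for illustration; the page does not give the exact estimators QuantVLA uses.

```python
import math
from statistics import pstdev


def temperature_scale(fp_logits, q_logits):
    """Per-head scalar alpha so alpha * q_logits has the spread of fp_logits.

    Assumed estimator: ratio of standard deviations over a calibration buffer.
    """
    q_std = pstdev(q_logits)
    return pstdev(fp_logits) / q_std if q_std > 0 else 1.0


def rms(values):
    """Root-mean-square magnitude, used here as a proxy for output energy."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def output_balance_scale(fp_out, q_out):
    """Per-layer scalar beta that restores the full-precision output RMS."""
    q_rms = rms(q_out)
    return rms(fp_out) / q_rms if q_rms > 0 else 1.0


# Toy calibration data: the quantized path over-sharpens logits by 1.2x
# and shrinks the projected output to 0.9x of its original magnitude.
fp_logits = [1.0, -2.0, 0.5, 3.0]
q_logits = [1.2 * v for v in fp_logits]
alpha = temperature_scale(fp_logits, q_logits)

fp_out = [0.4, -0.8, 1.0]
q_out = [0.9 * v for v in fp_out]
beta = output_balance_scale(fp_out, q_out)

# Folding: a scalar correction can be merged into an existing dequantization
# scale, so inference needs no extra multiply. The values below are made up.
dequant_scale = 0.05 * 0.01          # hypothetical weight scale * activation scale
folded_scale = alpha * dequant_scale
int_accumulator = 1234               # hypothetical integer matmul result
assert math.isclose(folded_scale * int_accumulator,
                    alpha * (dequant_scale * int_accumulator))

print("alpha:", alpha, "beta:", beta)
```

Because each correction is a single scalar per head or per layer, it can be estimated from a small unlabeled buffer and absorbed into scales that already exist, which is consistent with the claim that the architecture and operator schedule stay unchanged.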

@article{zhang2026quantvla,
  title={QuantVLA: Scale-Calibrated Post-Training Quantization for Vision-Language-Action Models},
  author={Zhang, Jingxuan and Hsieh, Yunta and Wang, Zhongwei and Lin, Haokun and Wang, Xin and Wang, Ziqi and Lei, Yingtie and Zhang, Mi},
  journal={arXiv preprint arXiv:2602.20309},
  year={2026}
}